Isaac Sim for Drone Simulation

Setting up a drone with onboard depth camera + LiDAR for indoor drone localization in Isaac Sim 5.0.

1) Goal & Context

This post starts documenting how I set up Isaac Sim 5.0 to prototype indoor, GPS‑denied drone localization pipelines. I wanted a way to:

- Simulate autonomous drone navigation,

- Visualize camera and LiDAR sensor data,

- Test out GPS‑denied localization algorithms with both known‑map and unknown‑map workflows.

The short tutorial video below was a great starting point for me:

- Video: “Get Started with Isaac Sim 5.0 (pip install)” — https://www.youtube.com/watch?v=NV2hqw8wu3U

2) Install Isaac Sim (pip)

2.1 Prerequisites (Workstation Specs)

Nvidia lists the following requirements for running Isaac Sim 5.x (see References):

- OS: Ubuntu 22.04/24.04 (Linux x64) or Windows 10/11

- CPU: 7th‑gen Intel i7 / Ryzen 5 or better (9th‑gen i7 / Ryzen 7 recommended; i9/Ryzen 9 ideal)

- RAM: 32 GB minimum (64 GB recommended)

- GPU: Recent GeForce RTX/RTX Ada or pro‑series RTX, with ≥16 GB VRAM for heavier scenes

- Storage: Fast SSD; dozens of GB free for assets and caches

You can verify your machine using the Isaac Sim Compatibility Checker. In my case, I have a dual-boot desktop PC with Ubuntu 22.04 installed on its own SSD, and that meets Nvidia’s requirements. I also already had ROS2 Humble installed.
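For a quick look before running the Compatibility Checker, the numbers listed above can be read straight from the system. This is only a minimal sketch, assuming a Linux machine with /proc/meminfo and GNU-style df; the helper name ram_gib is made up for illustration, and the script just reports values rather than replacing Nvidia's checker.

```shell
#!/bin/sh
# Rough pre-flight report for the requirements listed above (Linux only).
# ram_gib is a hypothetical helper name, not part of any NVIDIA tool.

# Print total RAM in GiB (rounded) from a meminfo-style file.
ram_gib() {
  awk '/^MemTotal:/ { printf "%.0f\n", $2 / 1048576 }' "${1:-/proc/meminfo}"
}

if [ -r /proc/meminfo ]; then
  echo "RAM: $(ram_gib) GiB (32 GB minimum, 64 GB recommended)"
fi

# GPU model and VRAM, only if the NVIDIA driver tools are installed.
if command -v nvidia-smi >/dev/null 2>&1; then
  nvidia-smi --query-gpu=name,memory.total --format=csv,noheader \
    || echo "nvidia-smi failed; check the driver installation"
else
  echo "nvidia-smi not found; install the NVIDIA driver first"
fi

# Free space on the filesystem that holds your home directory.
df -Ph "${HOME:-/}" | awk 'NR == 2 { print "Free disk: " $4 }'
```

Note that the kernel reserves a little memory, so a machine with 32 GB installed will typically report slightly less than 32 GiB here.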

2.2 Create a Python 3.11 environment (Ubuntu 22.04)

Isaac Sim 5.x (latest) requires Python 3.11, while Isaac Sim 4.x requires Python 3.10. At the time of writing, some relevant plugins such as Pegasus are supported on 4.x but not yet on 5.x, so pick the version (and the matching Python) accordingly.

# Create a working folder (it will hold the virtual env and the pip install)

mkdir -p ~/isaacsim && cd ~/isaacsim

# Ensure Python 3.11 is available

sudo apt update

sudo apt install -y python3.11 python3.11-venv python3.11-dev

# Create & activate a virtual environment

python3.11 -m venv env_isaacsim

source env_isaacsim/bin/activate

python -V # expect 3.11.x
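Since Isaac Sim 5.x and 4.x expect different Python versions, it can help to fail early when the wrong interpreter is active. The following is a small sketch of such a guard; require_python is a hypothetical helper name introduced here for illustration, not something that ships with Isaac Sim.

```shell
# Check that an interpreter matches the Python version Isaac Sim expects.
# require_python is a hypothetical helper name used only in this sketch.
require_python() {  # usage: require_python <major.minor> [interpreter]
  _want="$1"
  _py="${2:-python}"
  # Ask the interpreter itself for its major.minor version.
  _have="$("$_py" -c 'import sys; print("%d.%d" % sys.version_info[:2])')" || return 2
  if [ "$_have" = "$_want" ]; then
    echo "Python $_have OK"
  else
    echo "Python $_have found, but $_want is required" >&2
    return 1
  fi
}
```

With the venv active, `require_python 3.11` should succeed for Isaac Sim 5.x; for an Isaac Sim 4.x environment you would pass 3.10 instead.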

2.3 Install Isaac Sim 5.0 (pip) and Launch

It is also possible to download and install Isaac Sim from the Isaac Sim documentation website. Doing a pip install with your virtual environment activated keeps the installation contained in that environment, so if needed you can install another version of Isaac Sim in a separate environment with few issues.

# From inside the venv

pip install --upgrade pip

pip install "isaacsim[all,extscache]==5.0.0" --extra-index-url https://pypi.nvidia.com

Launch with:

isaacsim

3) ROS 2 Bridge Setup (Humble)

I initially had an issue where the ROS 2 bridge didn’t start because my system‑installed ROS 2 conflicted with Isaac Sim’s internal ROS libraries. The fix was to use Isaac Sim’s internal ROS 2 and FastDDS, and avoid sourcing the system ROS2 (which runs Python 3.10) in that terminal.

# (Optional) Activate the venv you installed Isaac Sim into

source env_isaacsim/bin/activate

# Point Isaac Sim’s ROS 2 bridge at its internal Humble runtime

export ROS_DISTRO=humble

export RMW_IMPLEMENTATION=rmw_fastrtps_cpp

# IMPORTANT: use the internal bridge libs that ship with Isaac Sim

# ISAACSIM_PY is the venv's site-packages path (computed below; set it by hand if yours differs)

export ISAACSIM_PY=$(python -c "import site; print(site.getsitepackages()[0])")

export LD_LIBRARY_PATH="$LD_LIBRARY_PATH:$ISAACSIM_PY/isaacsim/exts/isaacsim.ros2.bridge/humble/lib"

# Ensure you DON'T pull in system ROS 2 in this terminal

unset AMENT_PREFIX_PATH COLCON_PREFIX_PATH

# Launch Isaac Sim

isaacsim
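Because the fix above depends on the sim's terminal not carrying any system ROS 2 settings, a quick sanity check before launching can save a restart. The sketch below assumes the same ISAACSIM_PY variable and bridge library path used in the exports above; check_sim_shell is a hypothetical helper name, not an Isaac Sim command.

```shell
# Report whether this shell looks safe for Isaac Sim's internal ROS 2 libraries.
# check_sim_shell is a hypothetical helper name used only in this sketch.
check_sim_shell() {
  _status=0
  # These variables are exported by ROS 2 / colcon setup scripts.
  if [ -n "${AMENT_PREFIX_PATH:-}" ] || [ -n "${COLCON_PREFIX_PATH:-}" ]; then
    echo "WARN: system ROS 2 appears to be sourced in this shell"
    _status=1
  fi
  # The bridge libraries added to LD_LIBRARY_PATH should exist on disk.
  _lib="${ISAACSIM_PY:-}/isaacsim/exts/isaacsim.ros2.bridge/humble/lib"
  if [ ! -d "$_lib" ]; then
    echo "WARN: bridge library folder not found: $_lib"
    _status=1
  fi
  if [ "$_status" -eq 0 ]; then
    echo "OK: shell looks clean for Isaac Sim"
  fi
  return "$_status"
}
```

Running `check_sim_shell && isaacsim` would then only launch the simulator when both checks pass.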

Also check:

Window → Extensions, search for ros2, and verify that isaacsim.ros2.bridge is enabled.

When using separate shells for rviz2, rqt, or other ROS2 nodes, start by sourcing ROS2, and ensure you have a consistent domain ID:

# Source ROS2

source /opt/ros/humble/setup.bash

# In every ROS 2 shell you’ll use with this sim:

export ROS_DOMAIN_ID=42 # I actually used the default 0 so no need for this

# (Optional) persist it

echo 'export ROS_DOMAIN_ID=42' >> ~/.bashrc
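A mismatched domain ID is an easy way to end up with an empty topic list, so it can be worth printing the value each shell will actually use. This is a minimal sketch; effective_domain_id is a hypothetical helper name, and it relies only on the fact that ROS 2 falls back to domain 0 when ROS_DOMAIN_ID is unset.

```shell
# Print the domain ID a ROS 2 process started from this shell would use.
# effective_domain_id is a hypothetical helper name used only in this sketch.
effective_domain_id() {
  _id="${ROS_DOMAIN_ID:-0}"   # unset or empty means the default domain 0
  case "$_id" in
    *[!0-9]*) echo "invalid ROS_DOMAIN_ID: $_id" >&2; return 1 ;;
  esac
  echo "$_id"
}
```

Run `effective_domain_id` in the sim's terminal and in every ROS 2 terminal; the printed values should all match.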

4) Add an environment, drone, and sensors

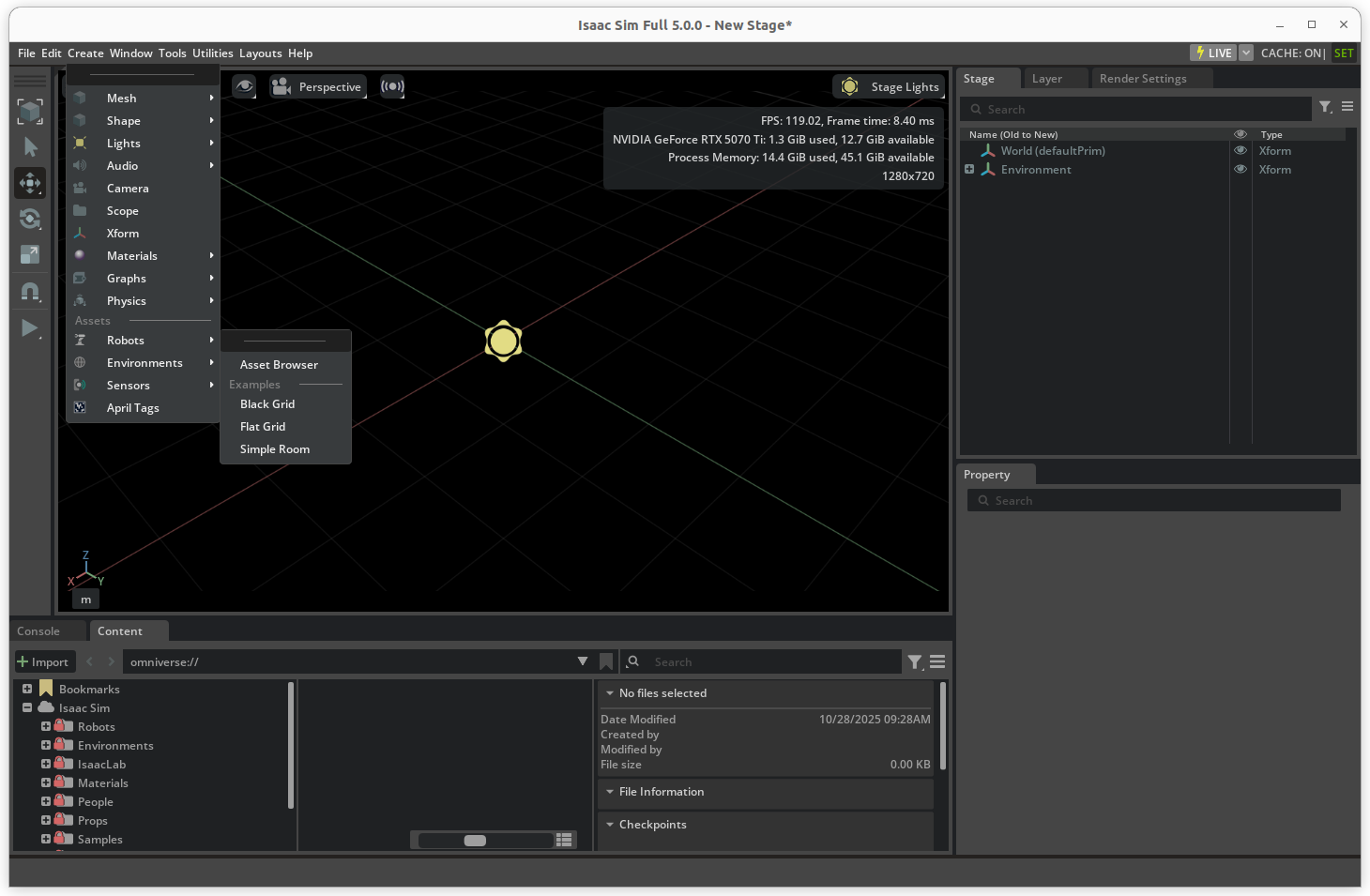

4.1 Create an environment

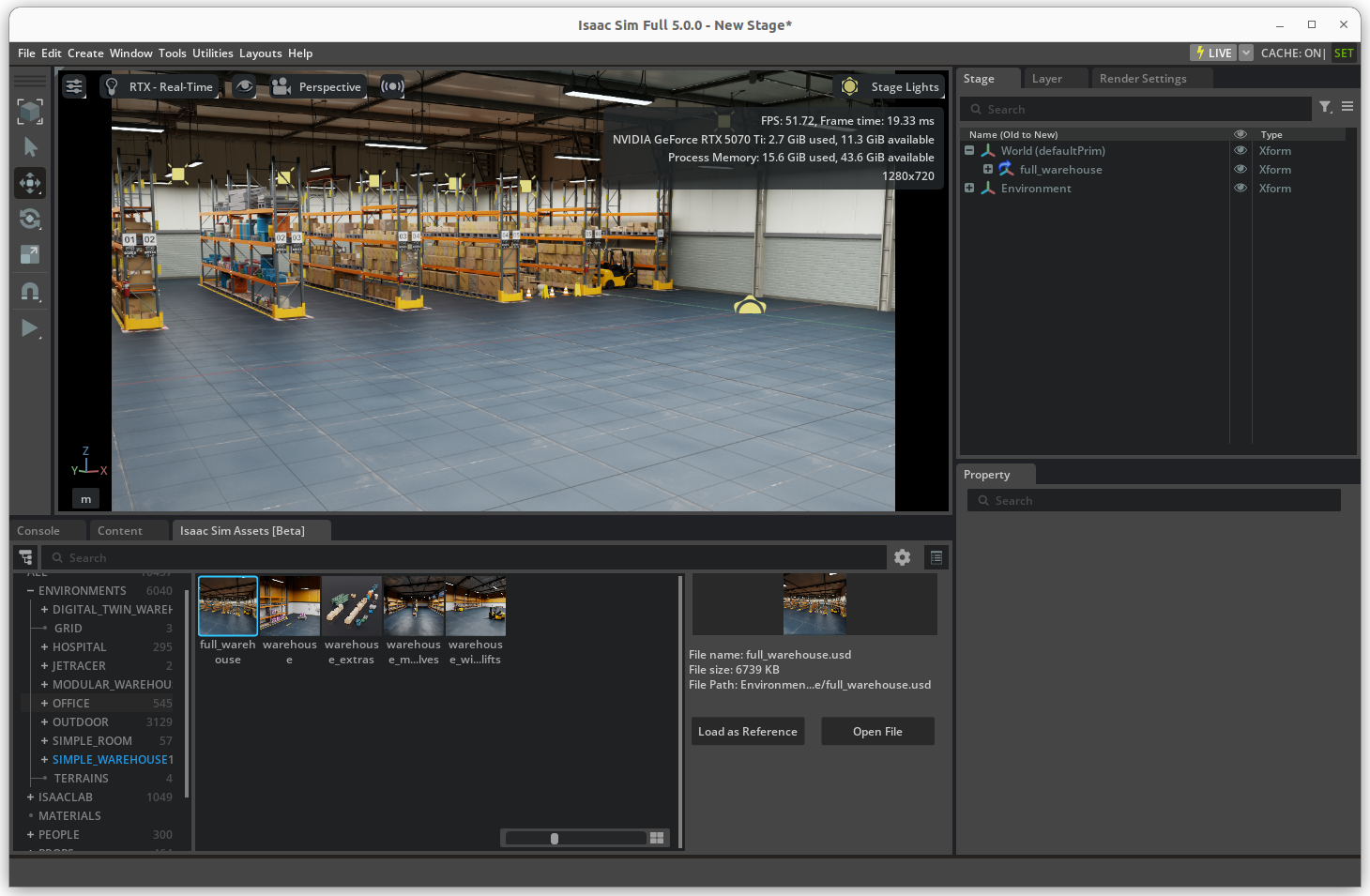

Once the simulator has started (the first launch of Isaac Sim can take some time), create an environment for the drone to interact with. You can start with a Ground Plane with physics/collisions enabled, or browse and import one of Isaac Sim’s environment assets (like its high-fidelity warehouse). You can also 3D scan your own room with your phone (using apps like Polycam or Scaniverse), edit the scan in Blender, and drag and drop it into the simulator.

In Isaac Sim:

Create → Environments → Asset Browser


You’ll then be able to browse all of Nvidia’s assets under the Isaac Sim Assets (Beta) tab in the bottom left. Simply drag and drop the warehouse into the stage/viewport.

Drag and drop the warehouse into the stage.

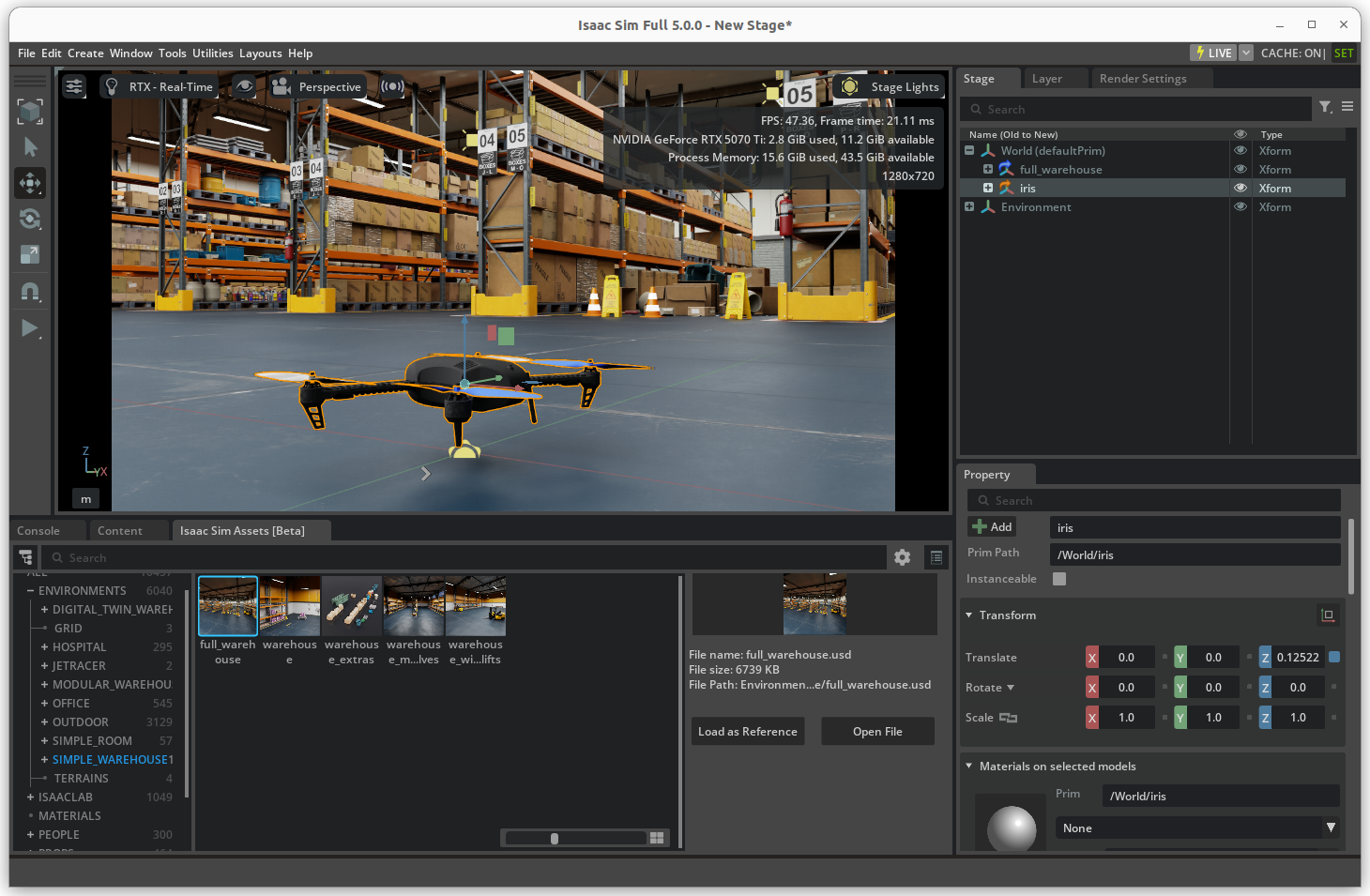

4.2 Bring in a reference quad (Iris)

A convenient, open asset is the Iris quad from Pegasus Simulator (built on Isaac Sim; see References). Download iris.usd from the Pegasus Simulator repository and add it to your scene. You can also use one of Isaac Sim’s drone assets (like the Crazyflie) or import your own.

In Isaac Sim:

File → Add Reference… → iris.usd

Drag and drop Iris drone.

Press Play: the drone will fall if it’s not constrained/controlled yet—that’s expected.

4.3 Add sensors and parent them to the body

- Under the asset browser, select and drag a Camera (RealSense D455) and a LiDAR sensor (Hesai XT32 for fun) into the stage.

Browse sensors in Asset Browser, then drag and drop on drone.

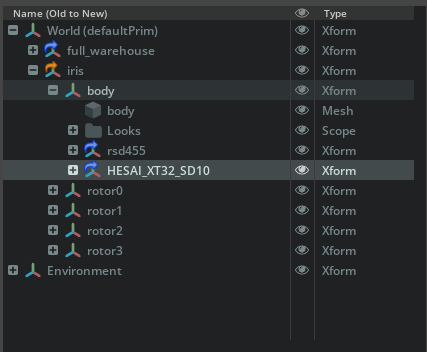

- In the Stage tree, drag the sensor prims under the drone’s body (e.g., /World/Iris/base_link) so they move with the vehicle when the sim starts.

Drag the imported sensors under the drone's body prim so that they move with the drone during simulation.

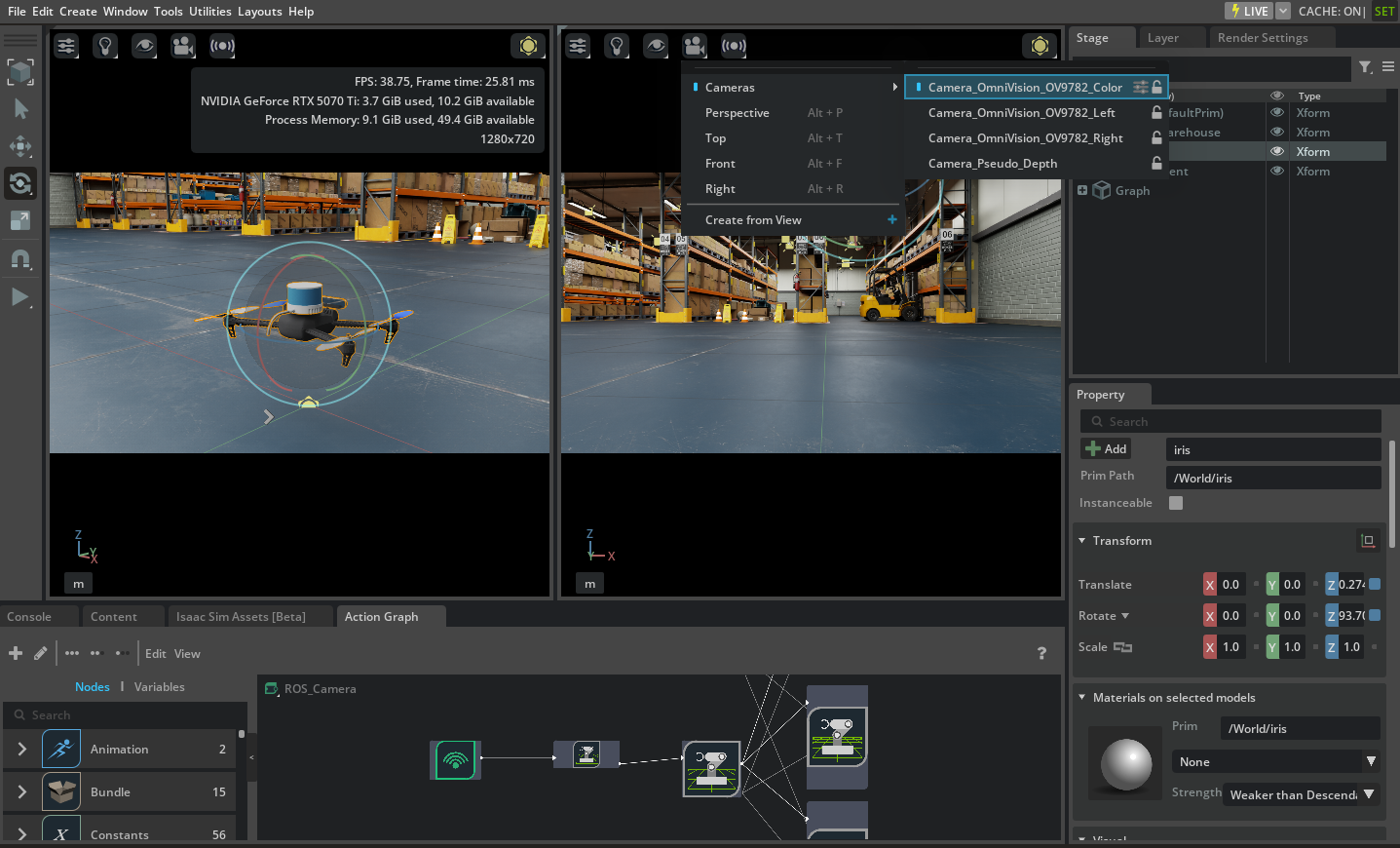

- You can preview a camera feed directly in Isaac Sim via Window → Viewport → Viewport 2: click the camera icon and then select the newly imported camera.

4.4 Publish to ROS 2 topics

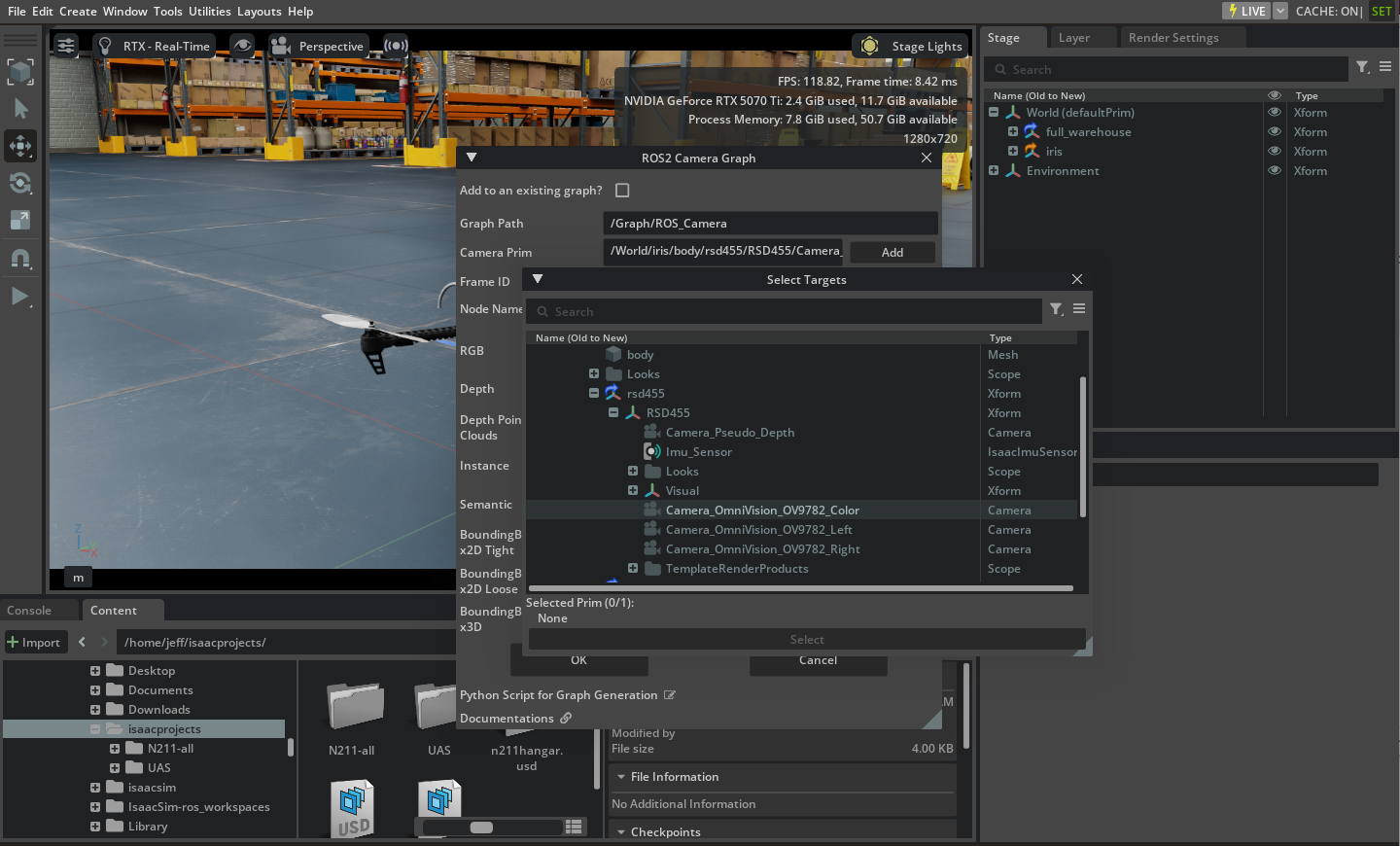

With the ROS 2 Bridge enabled, setup ROS2 publishers to stream the camera and LIDAR sensor data. This can be done fairly easily via Tools → Robotics → ROS2 OmniGraphs → Camera or RTXLidar Make sure to do the following:

- Set the

Camera Primto the path to your actual sensor (with typeCameraorLIDAR) - Set the

Frame IDto either the drone body prim or the sensor’s main prim - Optionally set a node name if you’ll be images or lidar data from multiple sensors simultaneously (set node name to

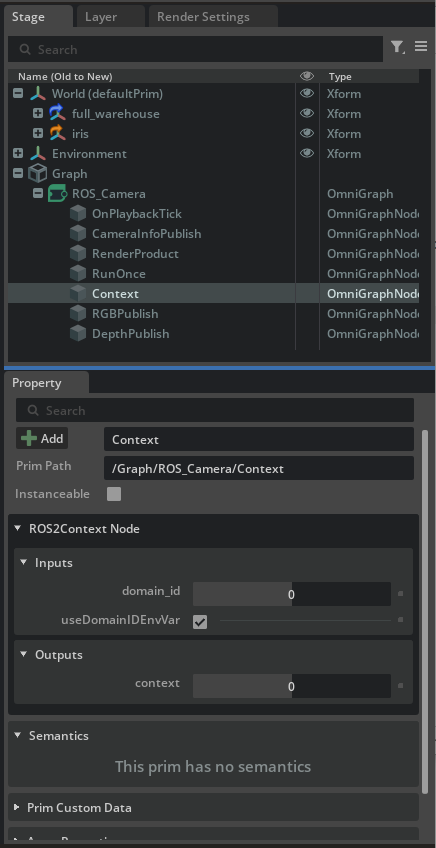

leftcam,rightcam…) - Click OK, and under the stage tree you’ll see a new element called

Graph. ExpandGraph → ROSCamera → Contextand double‑check that yourROS_DOMAIN_IDmatches the ROS2 Domain ID you’ll be using in the separate ROS2 shells/terminals.

Additionally, publish TF data with Tools → Robotics → ROS2 OmniGraphs → TF Publisher so that you can later visualize 3D data such as point clouds. Start by setting the Target Prim to the drone’s body prim and leaving the Parent Prim empty (it defaults to /World), then click OK. Next, create another ROS2 TF graph with the camera or LiDAR sensor as the target and the drone’s body as the parent. For this second graph, also check ‘Add to an existing graph’, which should add it to the world‑to‑body TF publisher graph created first.

Historically you had to wire an Action Graph by hand; the newer method auto‑generates the graph. You can still inspect it under Window → Action Graph.

5) Inspect Topics and Visualize Data

In a new terminal, run your usual tools:

# source it in a separate terminal, not the sim’s terminal:

source /opt/ros/humble/setup.bash

# Be sure the domain matches the sim’s terminal

export ROS_DOMAIN_ID=0 # It is already 0 by default

# Examples:

ros2 topic list

ros2 topic echo /whatever

rviz2

rqt
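Rather than scanning the output of ros2 topic list by eye, the expected topics can be checked in one go. The sketch below is plain text filtering, so it works on any topic listing piped into it; missing_topics is a hypothetical helper name, and the topic names in the usage line (/tf, /rgb, /point_cloud) are assumptions that depend on how you configured the publisher graphs.

```shell
# List which expected topics are absent from a topic listing read on stdin.
# missing_topics is a hypothetical helper name used only in this sketch.
missing_topics() {  # usage: ros2 topic list | missing_topics <topic>...
  _listing="$(cat)"   # capture the listing once so it can be searched repeatedly
  _missing=0
  for _topic in "$@"; do
    # -x matches whole lines only, -F treats the topic name as a literal string.
    if ! printf '%s\n' "$_listing" | grep -qxF -- "$_topic"; then
      echo "missing: $_topic"
      _missing=$((_missing + 1))
    fi
  done
  [ "$_missing" -eq 0 ]
}
```

For example, `ros2 topic list | missing_topics /tf /rgb /point_cloud` prints one line per topic that is not being published and returns a non-zero status if any are missing. If everything is reported missing, the domain ID or the Play state of the simulation is the first thing to check.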

5.1 Foxglove

Foxglove offers a nice app and web viewer for quick dashboard visualization. Follow the Foxglove instructions here and enable its ROS 2 bridge:

ros2 launch foxglove_bridge foxglove_bridge_launch.xml

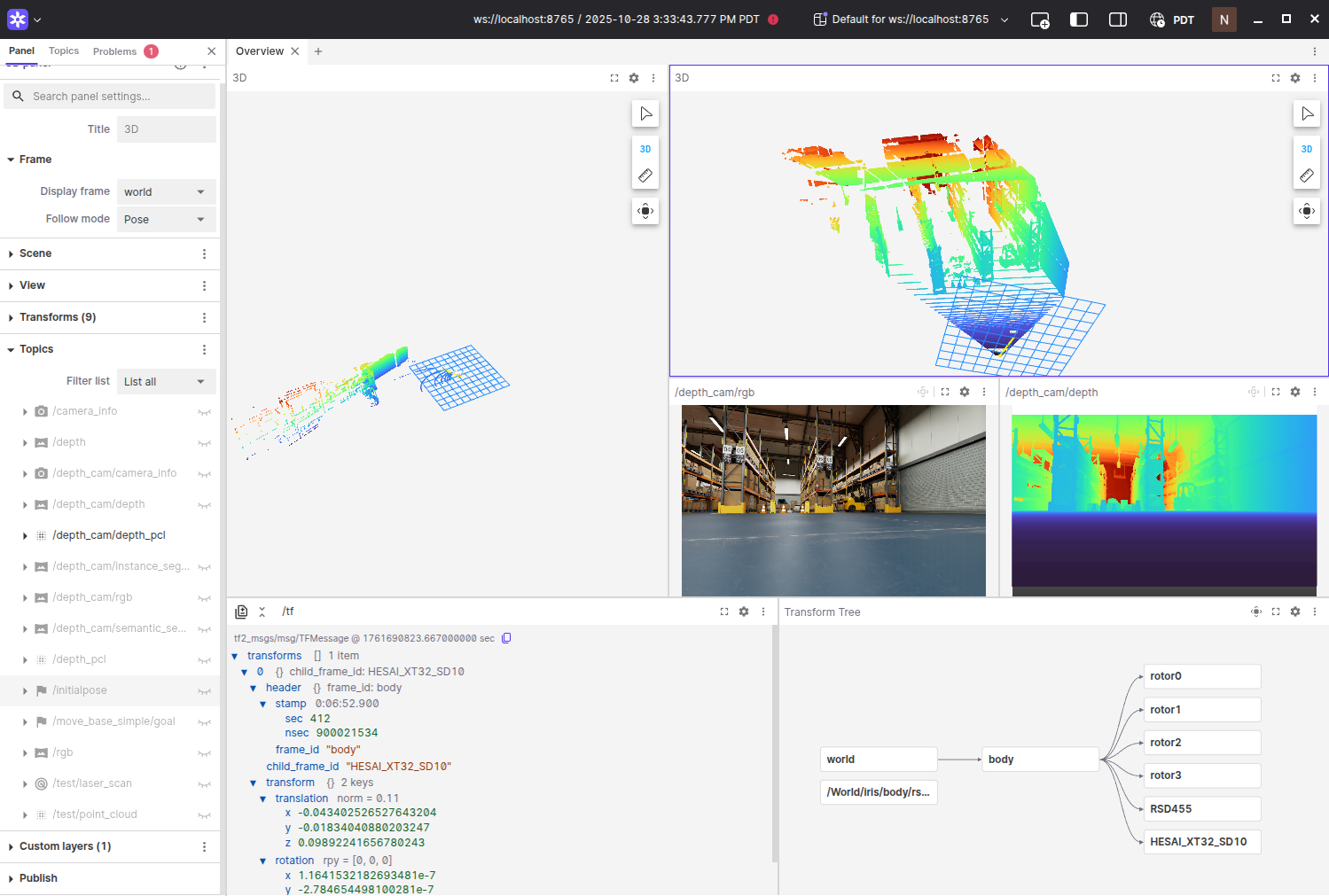

Then open Foxglove in the browser or app, add the WebSocket connection, and visualize the RGB image, depth image, and 3D data overlays.

Visualizing Sensor Data and TF Tree in Foxglove.

6) Next Steps

The next steps are to control the drone via ROS 2, either by setting waypoints or by teleoperating it manually, to collect data for running localization algorithms, and then to test those algorithms. Another thing to figure out is to what extent high-fidelity outdoor environments can be imported (via Gaussian splats) for simulating outdoor drone autonomy.

7) References

- Requirements: https://docs.isaacsim.omniverse.nvidia.com/5.1.0/installation/requirements.html

- Quick install & downloads (5.0.0): https://docs.isaacsim.omniverse.nvidia.com/5.0.0/installation/download.html

- Compatibility Checker (NGC): https://catalog.ngc.nvidia.com/orgs/nvidia/containers/isaac-sim-comp-check

- Pegasus Simulator (GitHub): https://github.com/PegasusSimulator/PegasusSimulator

- Pegasus Simulator (Docs): https://pegasussimulator.github.io/PegasusSimulator/
